Some of you will remember last year's amazing insights from the team at Capital One and the great Prem Natarajan, PhD. Unfortunately I missed this year's Cap One presentation, but I did hear from a panel of senior technology executives from RBC, Wells Fargo, and BNY. They talked through four key questions for financial services companies on agentic AI at scale.

- How are financial services companies moving agentic use cases to production?

- What special considerations are there for agentic AI in highly regulated spaces?

- What metrics are being used to measure return on investment?

- What predictions do you have about the next year in agentic AI?

How Are Financial Services Companies Moving Agentic Use Cases to Production?

At Royal Bank of Canada, they have "elevated" AI into a top enterprise strategic function while embedding its use cases into business lines. I'm sure there are tradeoffs here, and I wonder how they handle the likely proliferation of business units bringing their own AI (BYOAI).

RBC Went Deep

The "showcase" AI use cases here were in the capital markets research space. Their core AI platform, AIDEN, started ten years ago as an AI-powered electronic trading system that used reinforcement learning (RL) to continuously learn from market conditions and optimize trades in real time. Yeah, that sounds hard. They have extended it into a broader AI platform used across capital markets for trading algorithms, research automation, and insight generation for clients. Doing agentic AI at scale in the capital markets space is a pretty impressive feat, even though the specific use cases implemented feel to me like fairly classic deep research agents, which are pretty mature.

BNY Went Wide

Bank of New York described their AI strategy as "AI for everyone, everywhere, for everything". They explained this as meaning AI should be used by every employee for every interaction across every process, which is a pretty bold statement. I have questions about the actual viability of this as a practical strategy, but the point was well taken. I continue to think we will have many use cases and applications where the accelerated delivery of classic deterministic solutions is the right way to go.

A few of their use cases made my ears perk up. They named their main agentic use cases as dynamic asset pricing, lockbox processing, data insight generation, and, of course, data extraction. I have questions about the lockbox one. My guess is that it covers exception research and illogical-condition mining rather than actual payment processing, but they did not get into this. They take a platform mindset, with use cases deployed across a range of AI platforms. It seemed to me that RBC went really deep in one area, whereas BNY went wide. Both valid strategies, just different.

Wells Fargo Went Customer

Wells Fargo named their biggest hurdle as the "verifiability of agent actions at scale" in an automated way, a concern I have also heard from at least one enterprise leader in mortgage. They started their agentic journey with "Fargo", which began as a virtual assistant. They extended it into intent extraction and classification, then took baby steps toward specific agent capabilities like "make a payment" and "initiate a claim." Now they have moved into personalized capabilities with an emphasis on the verifiability of results. WF noted that if we can verify the result, then we can automate the action.
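To make "verify, then automate" a bit more concrete, here is a minimal sketch of the gate, assuming a hypothetical `ProposedAction` record and a hypothetical deterministic `payment_verifier`. This is not how Wells Fargo built Fargo; it only shows the shape of the idea: an action executes automatically only when its result passes a check, and everything else is routed to a person.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    # Hypothetical action an agent wants to take, e.g. "make_payment".
    name: str
    amount: float
    account_balance: float


def payment_verifier(action: ProposedAction) -> bool:
    # Hypothetical deterministic check: only payments with a positive amount
    # that the account balance fully covers count as verifiable.
    return action.name == "make_payment" and 0 < action.amount <= action.account_balance


def route_action(action: ProposedAction,
                 verifier: Callable[[ProposedAction], bool]) -> str:
    # Automate only when the result can be verified; otherwise escalate to a person.
    return "auto_execute" if verifier(action) else "human_review"


print(route_action(ProposedAction("make_payment", 250.0, 1000.0), payment_verifier))   # auto_execute
print(route_action(ProposedAction("make_payment", 5000.0, 1000.0), payment_verifier))  # human_review
```

The design point is that the verifier is deterministic and separate from the agent, so the automation decision does not depend on trusting the agent's own judgment.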

What Special Considerations Are There for Agentic AI in Highly Regulated Spaces?

We really heard two main themes: a focus on verifiable risk management, and culture.

Risk and Model Management

We heard multiple times about the need for verifiable risk management frameworks, evidence that the framework is in place, and the ability to prove that a particular implementation actually followed the framework and that risk was mitigated. That sounds really hard. This tells me that it's not enough to have guardrails implemented at a use case level. Yes, use-case guardrails are important, but they need to be part of a connected framework.

Think about providing evidence, for some subset of 40,000,000 logins a day (yes, that's a real number), that the risk management framework was followed. That means identifying which of these interactions were agentic, what use case each one implemented, and which specific controls and guardrails were triggered.
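Here is a minimal sketch of what "evidenceable" could mean at the record level, assuming each interaction emits a hypothetical `InteractionEvidence` record (the field names and control names are mine, not any bank's). Summarizing a sample answers the three questions above, and flags agentic interactions with no recorded use case or triggered control as evidence gaps.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InteractionEvidence:
    # Hypothetical per-interaction audit record; field names are illustrative.
    interaction_id: str
    is_agentic: bool
    use_case: Optional[str] = None
    controls_triggered: list[str] = field(default_factory=list)


def summarize(records):
    """Which interactions were agentic, which use case each ran, which controls fired."""
    agentic = [r for r in records if r.is_agentic]
    return {
        "total": len(records),
        "agentic": len(agentic),
        "by_use_case": Counter(r.use_case for r in agentic),
        "controls": Counter(c for r in agentic for c in r.controls_triggered),
        # Agentic interactions with no recorded use case or control are evidence gaps.
        "missing_evidence": [
            r.interaction_id for r in agentic
            if r.use_case is None or not r.controls_triggered
        ],
    }


sample = [
    InteractionEvidence("a1", True, "make_payment", ["pii_redaction", "amount_limit"]),
    InteractionEvidence("a2", False),
    InteractionEvidence("a3", True, "initiate_claim"),
]
report = summarize(sample)
print(report["agentic"], report["missing_evidence"])  # 2 ['a3']
```

At 40,000,000 logins a day this would live in a logging pipeline rather than a Python list, but the question it answers is the same.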

So guardrails need to be part of a larger, evidenceable strategy, which is much harder than implementing risk management in a single use case. We heard from one leader that they have had the same standing weekly meeting with their legal and risk partners for 2.5 years. Imagine the discipline and forethought that takes. Wow. Every week for years.

We also heard about model management but, again, with a focus on scale: how model management fits into a broader, systems-based approach. For each model choice, that means evaluating whether the model increases or decreases risk in the overall system, looking at the materiality of the risk, the complexity of the model, its degree of openness, and how explainable it is (or is not, in the case of black-box AI). Finally, it means looking at the degree of reliance on AI within market infrastructure.

Culture

Of course, we heard about process reimagination and the need to rethink the whole problem, with culture described as a deeply ingrained attitude toward rethinking a process as a whole. This was framed as a move from the trend of "I have AI magic, where can I use it?" to "what does AI-native actually mean?". For example, one of the panelists described having 1,700 use cases defined in 2024, with AI "all over the place" and a need to aggregate them.

I loved this statement, which I've also heard from the OCC: there is a big cost to playing it safe. The new Comptroller of the Currency expressed this sentiment last year as "not innovating is a big risk". I wonder if these attitudes are related; you need one perspective to allow the other. This statement came on the heels of another gem: "if you need to get really big things done, you need a small team with the right thinker/doer ratio and the right leader". And this one: "keep going - keep breaking glass".

We heard culture described as the long pole in the tent, with one panelist noting that "99% of employees have gone through AI training". I'm not sure that's the greatest success measure (attendance is not the same as application), but I do have to give him credit for measuring something.

What Metrics Are Being Used to Measure Return on Investment?

Bit of a mixed bag here, and what is impressive is that agentic AI was implemented at scale at all. Again, my $0.02 is that the use cases themselves are not profound. What is profound is how hard it must have been to get the use cases implemented across millions of interactions under intense regulatory scrutiny. Here is a list of all the metrics I heard about from these leaders:

- Number of people trained

- Number of agentic uses out of total interactions

- % reduction in time spent producing analysis

- Revenue per managing director

- Clients covered (out of 1500 total)

- Reduction in time spent producing credit memos (from 3-4 weeks to 1-2 days)

- Call "deflection," meaning inquiries that were contained and did not become calls. Wells Fargo noted that in the 1.5 years they have had Fargo, they have had 500M Fargo interactions, with between two and three million calls deflected.

- Complex account opening from 18 days to 15 minutes

- 25% increase in developer "burn rate"

- Number of platform capabilities

- Training breadth

- Degree of organizational enablement

- How fast a use case is implemented from concept to cash

- Risk capabilities implemented in the automated pipeline
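A few of these numbers are easier to compare once they share a unit. This quick arithmetic uses only the figures as I heard them, assumes calendar days and 7-day weeks, and treats Fargo's 500M interactions as the denominator for deflection, which is my assumption and not necessarily how Wells Fargo reports it.

```python
MINUTES_PER_DAY = 24 * 60


def speedup(before: float, after: float) -> float:
    """How many times faster the new process is (both values in the same unit)."""
    return before / after


def pct_reduction(before: float, after: float) -> float:
    """Percentage of the original time eliminated."""
    return 100 * (before - after) / before


# Complex account opening: 18 days -> 15 minutes
account_before = 18 * MINUTES_PER_DAY  # 25,920 minutes
account_speedup = speedup(account_before, 15)
account_cut = pct_reduction(account_before, 15)

# Credit memos: 3-4 weeks -> 1-2 days, pairing the slowest and fastest ends (in days)
memo_range = (speedup(3 * 7, 2), speedup(4 * 7, 1))

# Fargo: 2-3 million calls deflected out of 500M interactions (assumed denominator)
interactions = 500_000_000
deflection_pct = [100 * calls / interactions for calls in (2_000_000, 3_000_000)]

print(f"Account opening: {account_speedup:.0f}x faster, {account_cut:.2f}% less time")
print(f"Credit memos: {memo_range[0]:.1f}x to {memo_range[1]:.1f}x faster")
print(f"Fargo deflection: {deflection_pct[0]:.1f}% to {deflection_pct[1]:.1f}% of interactions")
```

These ratios say nothing about cost or quality, but they do put the claims on the same footing.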

What Predictions Do You Have About the Next Year in Agentic AI?

Themes here were continuous agentic loops, process reimagination, and pace of change.

- Agentic loops. Certainly this is an extension of the OpenClaw mania that we heard so much about throughout the whole conference. The idea is that we will see more and more agents that loop continuously and improve themselves based on self-reflection.

- Process reimagination. The statement here was "banks that leverage and redesign with agents will pull away from the pack in the next year".

- Pace of change. As training and inference converge, the pace of change will be even faster. The prediction is that we will see the "bank as a system that learns every day and becomes even more personalized".

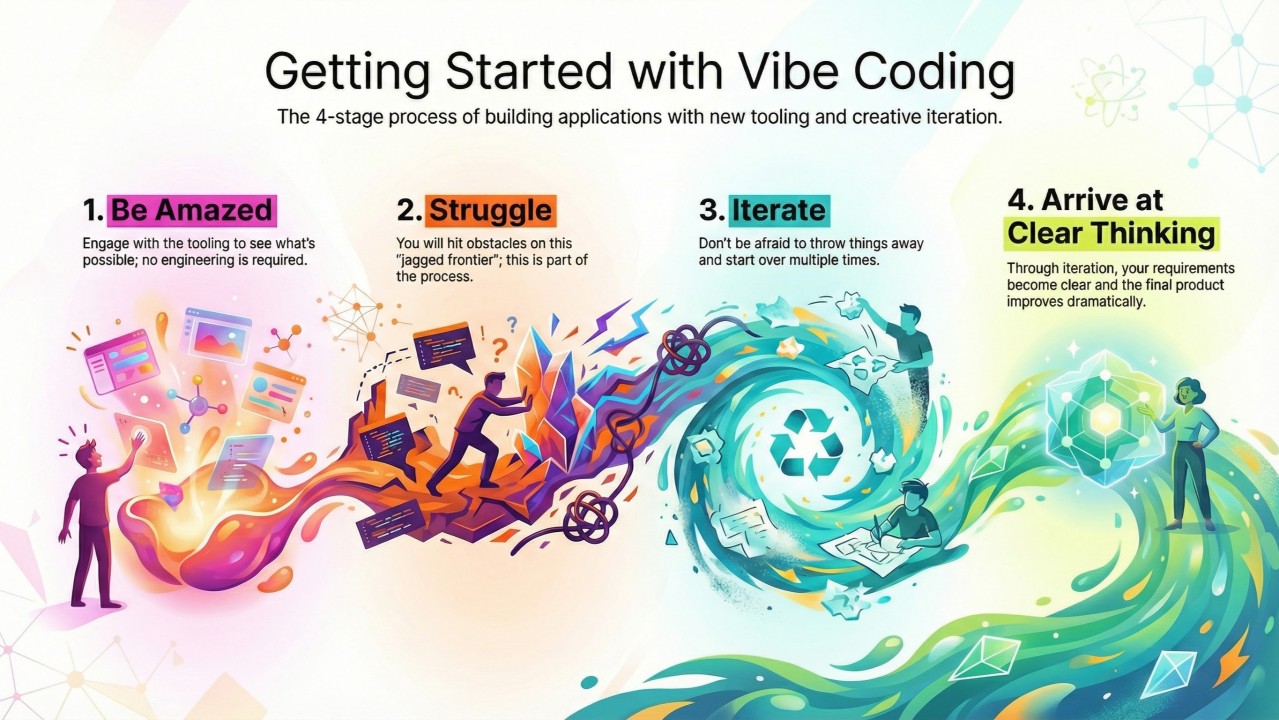

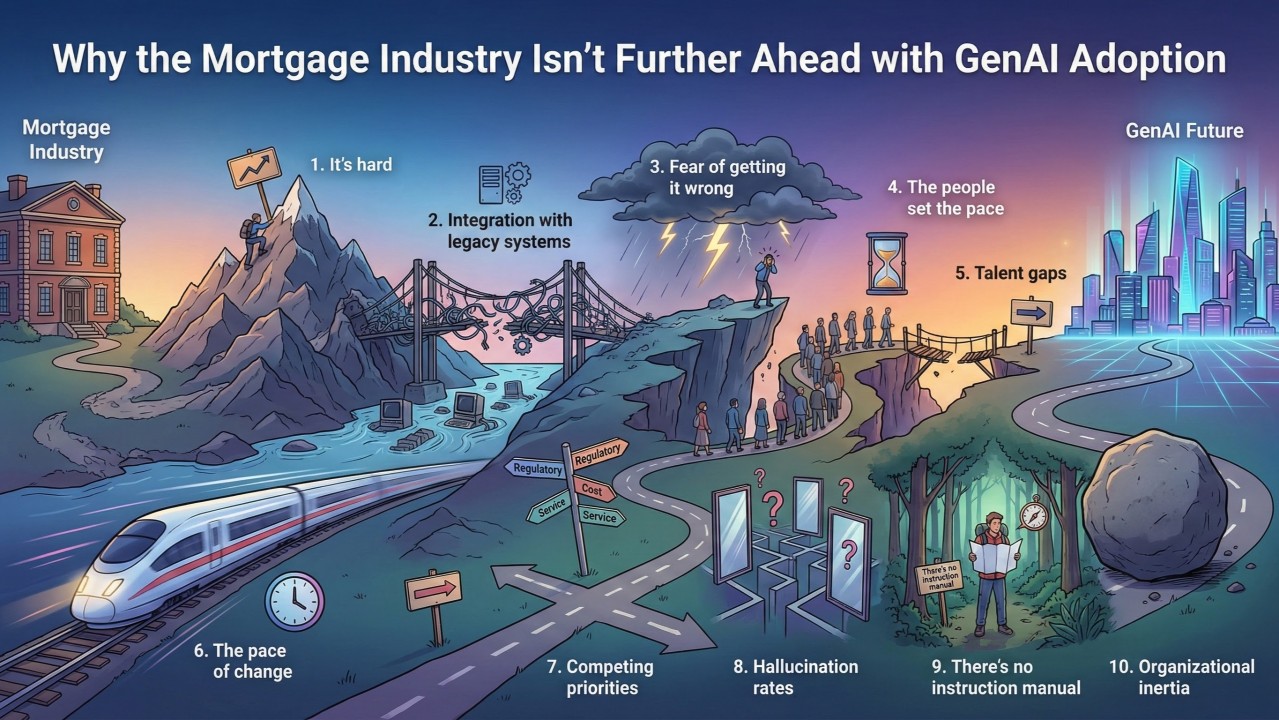

What This Means to Us in Mortgage

This was a really cool panel because it was real people talking about real, regulated AI at real scale. Without a ton of time to reflect this morning, I think my main takeaway for us is that the use cases people (are willing to) talk about at scale are not super profound, which is not to say they are uninteresting or trivial to implement. What I heard was a lot of useful, customer- or operator-enhancing solutions to problems we have today. With all the agent mania, I think the message here is, again, to scope the problem tightly and iterate. I will also say again that this is not where the differentiation will ultimately come from.

We really have two paths to take simultaneously. The first is about being faster: whatever we do today, do it faster. For a time, there will be advantage here. At the same time, we have to look at redesigning a mortgage ecosystem that is specifically designed to be accelerated. Just as the innovation of accelerated computing changed everything, so too will accelerated mortgage. Mortgage is designed to be slow. It's a big, hairy decision where a lot of money changes hands and dreams are made. It's heavily regulated. The processes and technology are outdated. These are all natural throttles to respect and overcome, but this acceleration, native acceleration, is where the real edge will come from.

By Tela Mathias, CTO and Chief Nerd at PhoenixTeam