You’ve heard me talk before about how coming to NVIDIA especially is like stepping into the future. The robots, the level of technical acumen, the global perspective, and, of course, the tech. This year I found myself, well, depressed. AI is eating everything, absolutely everything. Most products out there today will be eaten by either (1) a foundation model provider, (2) a fantastically well-funded, AI-native application provider, or (3) a mega-consultancy. I found myself feeling very excluded. I wondered about how we fit in and what version of the future includes us. It was a little bit defeating. But we power on and take this as a moment to adapt our original pivot and think very carefully about what our niche is in the AI future.

Everything is about tokens to Jensen. It was last year and it was this year again. Tokens, as you know if you’ve taken one of my classes, are numerical representations of words or parts of words. They are the language of generative AI. The machine of AI as we know it today runs on tokens and ultimately, Jensen’s perspective is that every company’s success in the AI future is based on how they optimize the value associated with these tokens. If we traverse the AI stack, it starts at the bottom with power and ends at the top with value. And tokens are the digital fuel.
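If it helps to see the idea of "words as numbers" concretely, here is a toy sketch in Python. It is only an illustration under simplifying assumptions: it assigns one number per whole word, whereas real models typically use subword tokenizers that also split words into pieces. The function names (`build_vocab`, `encode`, `decode`) are made up for this example and are not from any library.

```python
def build_vocab(texts):
    """Give each distinct lowercase word an integer ID, in order of first appearance."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def encode(text, vocab, unknown_id=-1):
    """Turn text into a list of token IDs; words not in the vocabulary get unknown_id."""
    return [vocab.get(word, unknown_id) for word in text.lower().split()]


def decode(ids, vocab):
    """Turn token IDs back into text; unrecognized IDs become "<unk>"."""
    id_to_word = {token_id: word for word, token_id in vocab.items()}
    return " ".join(id_to_word.get(token_id, "<unk>") for token_id in ids)


vocab = build_vocab(["tokens are the language of generative ai"])
ids = encode("tokens are the language of ai", vocab)
print(ids)                 # [0, 1, 2, 3, 4, 6]
print(decode(ids, vocab))  # tokens are the language of ai
```

The model never sees the words themselves, only lists of numbers like the one printed above, which is why "tokens in, tokens out" is a fair description of what the machine is doing.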

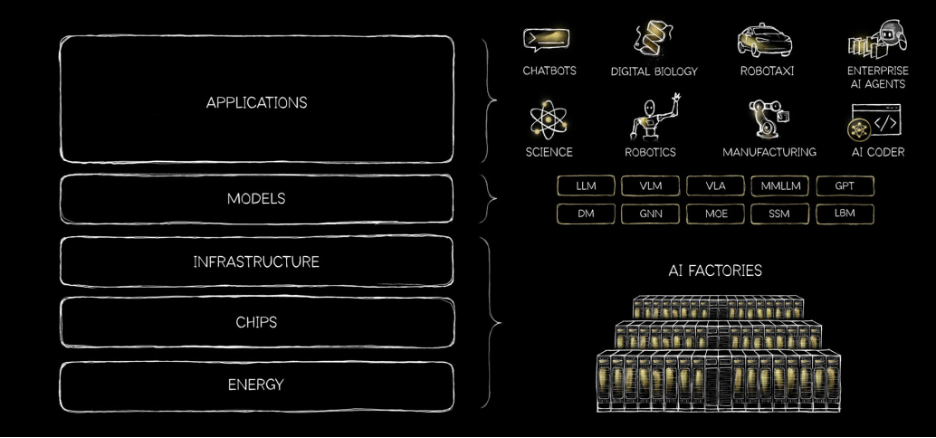

For context, the “AI stack” is a five-layer cake with land, power, and shell at the bottom. This is the level of energy and the shelter for energy. The next level is compute silicon (microchips), which turns electricity into work. Next is infrastructure (platforms); in the NVIDIA context, think software and libraries (CUDA), integrated systems, and AI factories. Only then do we get to models, the actual embodied algorithms, compute, and systems that generate insights. And finally, the application layer – this is the layer where value is created. The model and application layers are the ones that are eating everything.

NVIDIA describes itself as a platform company, so let’s talk about platforms for a minute. At the software and libraries level, we have CUDA, which stands for Compute Unified Device Architecture. It is a parallel computing platform and programming model developed by NVIDIA that allows software to use NVIDIA graphics processing units (GPUs) for general-purpose processing. CUDA was that original NVIDIA breakthrough, roughly 20 years ago, that has powered accelerated computing as we know it today. Then we have systems, those integrated machines and infrastructures that NVIDIA sells (and that are effectively powering all of AI today globally). And then there are AI factories, a newer Jensen metaphor: large-scale infrastructure solutions that output, yes, tokens.

Of course, it wouldn’t be GTC without a discussion of the flywheel powered by CUDA. CUDA has a massive installed base attracting developers who create breakthroughs that power the ecosystem. And the flywheel continues.

It all started with video games and GeForce, invented 25 years ago. Jensen’s joke was that “your parents paid for you to become an NVIDIA customer”, and he’s not wrong. A graphics card every year was an investment in the future. From pixel shader to the big bang of AI. From here, he spent a lot of time talking about structured data as the foundation of trustworthy AI, the ground truth.

Of note, the largest group of participants at GTC this year is from the financial services sector. NVIDIA identifies nine industry verticals – automotive, financial services, healthcare and life sciences, industrial, media and entertainment, quantum, retail and supply chain, robotics, and telco. This to me is a very strong (additional) signal that AI is coming for mortgage. I mostly see this in how we compete in the services space. I routinely find myself competing with the likes of McKinsey and Boston Consulting Group. We also see that skills dropped by foundation model providers are wiping out whole products. There is a tail to this.

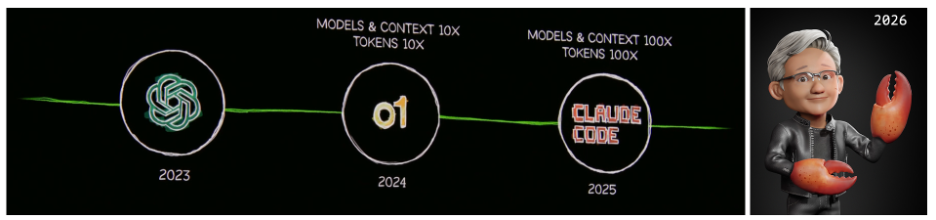

In terms of where we are, Jensen looked back on 2023 (which was really 2022) as starting it all with OpenAI and ChatGPT. Then in 2024 reasoning AI came out, also from OpenAI, and then Claude Code in 2025. So the journey is from AI that could generate, to AI that could reason, and finally to AI that could do work. This brings us to the inflection point of inference. The inference inflection has arrived, and it was made that much more significant by the Open Claw moment we are having now in 2026 (which really started in November 2025, when Open Claw dropped).

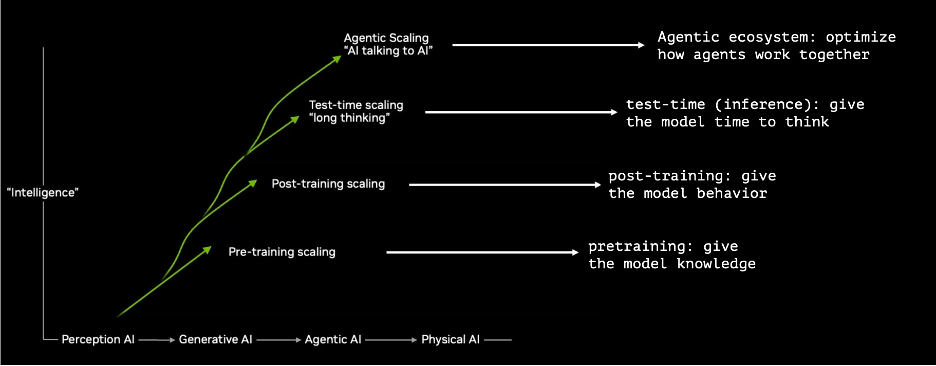

Jensen introduced a new scaling law, so we do have to talk briefly about scaling laws. Let me start with the one thing you have to know about them. More Compute = Better AI. That’s it.

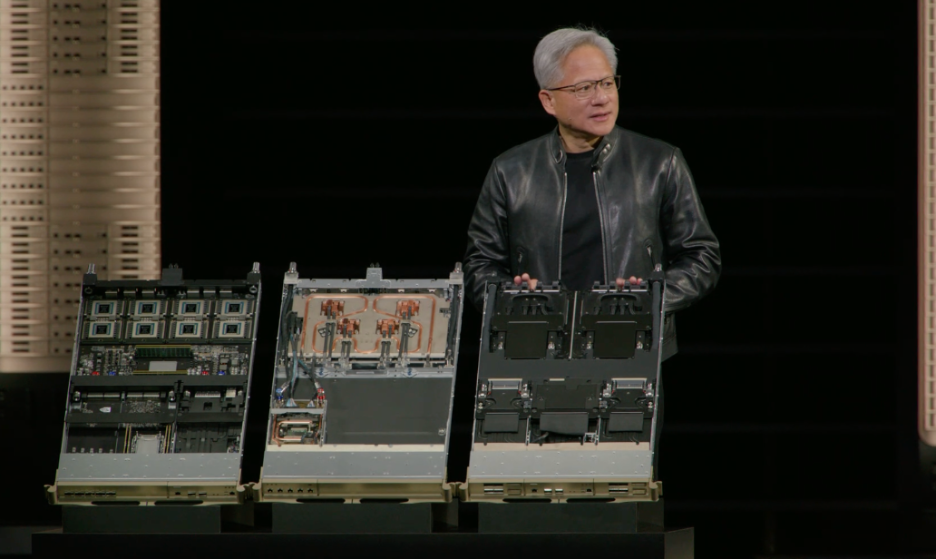

And then there were all the chip and infrastructure announcements. Yawn. Yes, they are awe-inspiring and I’m sure they are quite important, but this is always where you lose me during the keynote. I can only hang in for so long, and the chips are where I reach my full AI saturation.

Notwithstanding significant, valid criticism of NVIDIA and Jensen in the geopolitical sphere, I am still a (somewhat guarded) Jensen superfan. I am always in awe of Jensen’s ability and willingness to pivot. He described the decision to rearchitect Hopper right when Hopper was in its prime adoption. But I guess when you have almost five trillion dollars in market cap, that’s a much easier decision than when you are scratching to make a living.

By Tela Gallagher Mathias, CTO at PhoenixTeam